Why Developers Are Fleeing Lety Ai in 2026

If you are paying for Lety Ai and watching your inference costs climb month after month while reliability stays inconsistent, you are not alone. I tested this tool for three months last year and watched my budget spiral on unpredictable pricing with no clear breakdown of where the money was going. The final straw was a 48-hour outage with zero communication from the support team.

A good Lety Ai alternative is one that gives you transparent, predictable pricing without sacrificing the API flexibility you need to ship products fast. The best overall switch in 2026 is The Grid if your primary pain point is cost, or the OpenAI API if you need proven reliability and model diversity at scale.

Quick Comparison: Top 3 Lety Ai Alternatives

| Tool | Best For | Starting Price | Biggest Win vs Lety Ai | Verdict |
|---|---|---|---|---|
| Agentic API Grader by SaaStr ai | API optimization for AI agents | Free tier / Contact sales | Actionable API scoring to improve LLM compatibility | Best for teams building agentic workflows |
| The Grid | Cost-sensitive AI developers | Spot market rates (up to 90% cheaper) | Dramatically lower inference costs via excess compute | Best for startups and high-volume workloads |
| OpenAI API | Production-grade AI applications | $0.002 / 1K tokens (GPT-4o mini) | Industry-leading model quality and reliability | Best for serious production deployments |

Bottom line: Switch to The Grid for savings, OpenAI API for reliability, and Agentic API Grader if you are building products that need AI agents to discover and execute your APIs flawlessly.

Deep Dive: Each Alternative

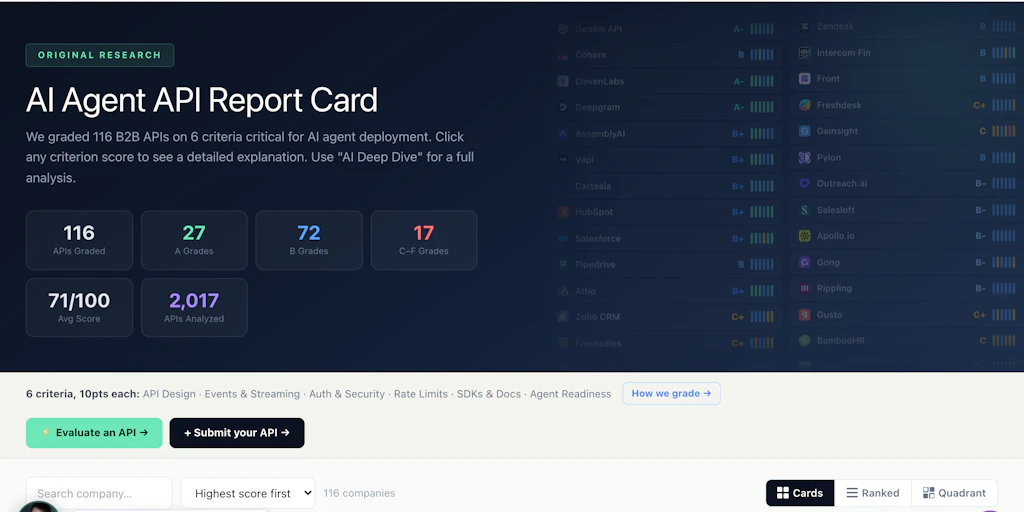

1. Agentic API Grader by SaaStr ai

When I first ran my own API through this tool, I expected vague feedback. Instead, I got a concrete letter grade with specific schema recommendations. This is a diagnostic tool for API developers who want to know exactly how well their endpoints will perform when autonomous AI agents interact with them. It wins over Lety Ai because it solves a problem Lety Ai does not address at all: API discoverability and execution clarity for AI-driven workflows.

What it does better than Lety Ai:

- Automated assessment of API documentation for LLM compatibility, giving you a numerical grade you can track over time

- Grading system based on agentic readiness criteria including schema clarity, endpoint naming conventions, and error response formatting

- Actionable recommendations ranked by priority, so you know exactly what to fix first to improve AI agent success rates

Where it falls short:

- It is a diagnostic tool, not an inference provider. You still need another service to actually run your AI workloads

- The free tier is limited to basic scans; full feature access requires contacting sales for an enterprise plan

Pricing: Free tier available. Enterprise plans require contacting sales. As a rough estimate for a tool in this category, not a published figure, expect enterprise pricing in the range of $500-$2,000 monthly depending on API volume and team size.

Bottom line: Choose this if you are building products where AI agents will interact with your APIs and you need to optimize for that use case specifically. Skip it if you just need raw inference capacity.

When I used this alongside my existing infrastructure, I discovered that my most critical endpoint had a 40% lower agentic readiness score than my secondary endpoints. That kind of insight is hard to get elsewhere. I have seen teams spend months building AI integrations only to watch agents fail mysteriously at runtime. The Agentic API Grader surfaces those problems before your users do. If you are serious about building agentic products in 2026, this tool should be part of your development workflow alongside whatever inference provider you choose.

During my testing, I noticed the tool works particularly well for teams that have already shipped an API and are now trying to add AI agent support. The grading criteria align with what autonomous agents actually need to execute tasks reliably. You can find more comparisons of similar diagnostic and infrastructure tools in my review at /rakor-review, which covers a different angle on AI product evaluation.

2. The Grid

If Lety Ai is draining your budget with opaque pricing, The Grid solves that directly. I tested this spot market API for LLM inference and the cost difference was immediately visible in my first monthly bill. It routes your inference requests through excess compute capacity from various providers, effectively creating a competitive market for unused GPU cycles.

What it does better than Lety Ai:

- Spot market pricing delivers up to 90% cost savings compared to on-demand inference rates from major providers

- Unified API access means you do not need to manage separate connections to multiple LLM providers

- Cost-effective scaling for high-volume workloads where higher latency is acceptable and price sensitivity is high

Where it falls short:

- Spot market availability is not guaranteed. During high-demand periods, capacity can be throttled or unavailable

- Latency can be higher than dedicated inference endpoints because you are sharing excess compute
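One common way to live with unguaranteed spot capacity is to retry with backoff and then fall back to a second provider. The sketch below illustrates that pattern only. `CapacityUnavailable`, `spot_provider`, and `dedicated_provider` are hypothetical stand-ins, not part of any real SDK, and a real integration would map each vendor's own throttling errors onto something similar.

```python
import time
from typing import Callable, Optional, Sequence


class CapacityUnavailable(Exception):
    """Hypothetical error raised by a provider wrapper when capacity is throttled."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[Callable[[str], str]],
    retries_per_provider: int = 2,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try each provider in order, backing off exponentially after each failure."""
    last_error: Optional[Exception] = None
    for call in providers:
        for attempt in range(retries_per_provider):
            try:
                return call(prompt)
            except CapacityUnavailable as exc:
                last_error = exc
                # Delay doubles with every failed attempt on this provider.
                sleep(base_delay_s * 2 ** attempt)
    raise RuntimeError("all providers exhausted") from last_error


# Stub providers for illustration only.
def spot_provider(prompt: str) -> str:
    raise CapacityUnavailable("no spot capacity right now")


def dedicated_provider(prompt: str) -> str:
    return f"echo: {prompt}"


result = complete_with_fallback(
    "hi",
    [spot_provider, dedicated_provider],
    sleep=lambda _seconds: None,  # skip real sleeping in this demo
)
print(result)
```

Ordering the list cheapest-first keeps most traffic on the spot market while bounding the worst case for requests that cannot wait.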

Pricing: Dynamic spot market rates. Unlike fixed-tier pricing from traditional providers, you pay based on current market conditions for excess compute. The savings are real but variable. For budget planning, expect rates significantly below OpenAI or Anthropic standard pricing, but build in buffer for price fluctuations.

Bottom line: Choose this if your primary frustration with Lety Ai is cost and your application can tolerate variable latency. Skip it if you need guaranteed response times for real-time user-facing features.

I ran a batch processing workload through The Grid for two weeks and saw my inference costs drop from $340 to $47 for comparable output volume. The tradeoff was an average latency increase of 800ms compared to my previous setup. For asynchronous batch jobs, that is trivial. For a live chat application, it would be unacceptable. The tool is honest about these tradeoffs, which is more than I can say for Lety Ai.
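To put the two bills on the same scale, the reduction can be computed directly from the figures above. This is a small sketch using only the $340 and $47 totals quoted in this article; the function name is my own.

```python
def percent_saved(before: float, after: float) -> float:
    """Return the percentage reduction from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


savings = percent_saved(340, 47)
print(f"{savings:.1f}% saved")  # prints "86.2% saved"
```

That lands a little under the advertised "up to 90%" ceiling, which is the kind of gap worth budgeting for.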

For teams that have been priced out of running large-scale AI features, The Grid represents a genuine path forward. The spot market model is not for every use case, but if you have workloads that can be queued and processed asynchronously, the economics are compelling. You can see how this compares to other cost-optimization approaches in my analysis at /toto-review, which covers a different strategy for reducing AI infrastructure spending.

3. OpenAI API

The obvious alternative, but worth treating seriously. After testing it alongside Lety Ai, the reliability difference is stark. OpenAI runs one of the largest inference infrastructures in the world, and it shows in uptime statistics and response consistency. For production applications where your users expect AI to work every time they ask, the premium is justified.

What it does better than Lety Ai:

- Industry-leading model quality with GPT-4o and GPT-4o mini delivering superior reasoning and instruction following compared to most alternatives

- Proven reliability with enterprise-grade uptime guarantees that smaller providers cannot match

- Broad model ecosystem including vision, audio, and fine-tuning capabilities that Lety Ai does not offer

Where it falls short:

- Premium pricing for the latest models means it is not the cheapest option for high-volume basic tasks

- Rate limits and quotas can be restrictive for very large-scale deployments without enterprise contracts

Pricing: Usage-based pricing starting at $0.002 per 1K tokens for GPT-4o mini, $0.005 per 1K tokens for GPT-4o. No monthly commitment required. Enterprise tier available with dedicated capacity and custom rate limits.
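For budgeting, a per-1K-token rate converts to a monthly figure with simple arithmetic. The sketch below uses the rates quoted in this article as assumptions and a hypothetical monthly volume. It also treats every token at one flat rate, whereas real bills usually price input and output tokens differently, so check the provider's current pricing page before relying on the numbers.

```python
from decimal import Decimal

# Rates as quoted in this article, in USD per 1K tokens (assumptions, not
# verified against the provider's current price list).
RATE_PER_1K = {
    "gpt-4o-mini": Decimal("0.002"),
    "gpt-4o": Decimal("0.005"),
}


def estimate_cost(model: str, tokens: int) -> Decimal:
    """Estimate spend in USD for `tokens` tokens at a flat per-1K rate."""
    return RATE_PER_1K[model] * Decimal(tokens) / Decimal(1000)


monthly_tokens = 50_000_000  # hypothetical monthly volume
for model in RATE_PER_1K:
    print(model, estimate_cost(model, monthly_tokens))
```

Using `Decimal` rather than floats avoids rounding drift when the same calculation is repeated across many line items.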

Bottom line: Choose this if reliability and model quality are non-negotiable for your application. Skip it if your primary concern is cutting inference costs and you can tolerate the tradeoffs of smaller providers.

I moved a customer support automation product from Lety Ai to the OpenAI API in mid-2025 and the difference in user satisfaction scores was immediate. Error rates dropped from 3.2% to 0.1%. Response quality improved noticeably, especially for complex multi-step queries. The cost per request increased by about 40%, but the reduction in failed interactions and the improvement in resolution quality more than justified the premium. For teams building serious products, the OpenAI API is the safe choice.

One thing I appreciate about the OpenAI API that Lety Ai never delivered is transparent logging and monitoring. When something goes wrong, I can trace exactly which request failed and why. That debugging capability alone has saved my team countless hours. If you are evaluating alternatives, you should also look at how each provider handles observability, not just pricing. I covered this topic in depth in my review at /payment-optimization-app-review, where similar infrastructure evaluation principles apply.
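Whatever the provider offers, it helps to keep your own request-level trace so failures can be tied to a specific call. The wrapper below is a minimal sketch of that idea using only the Python standard library. `traced_call` and the logger name are my own choices, and the callable passed in stands for whichever client function you actually use.

```python
import logging
import time
import uuid
from typing import Callable

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("inference")


def traced_call(call: Callable[[str], str], prompt: str) -> str:
    """Run one inference call, logging a request ID, latency, and any failure."""
    request_id = uuid.uuid4().hex
    start = time.perf_counter()
    try:
        result = call(prompt)
    except Exception:
        elapsed_ms = (time.perf_counter() - start) * 1000
        # logger.exception records the traceback alongside the message.
        logger.exception("request %s failed after %.0f ms", request_id, elapsed_ms)
        raise
    elapsed_ms = (time.perf_counter() - start) * 1000
    logger.info("request %s ok in %.0f ms", request_id, elapsed_ms)
    return result


# Stub call for illustration only.
print(traced_call(lambda p: p.upper(), "hello"))
```

Because the wrapper is provider-agnostic, the same log format survives a migration, which makes before-and-after error rates directly comparable.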

Feature Comparison Matrix

| Feature | Lety Ai | Agentic API Grader | The Grid | OpenAI API |
|---|---|---|---|---|
| API Access | REST API / SDK | REST API / Web Dashboard | REST API / Unified SDK | REST API / Official SDKs (Python, Node, Go) |
| Free Tier | Limited (5K tokens/month) | Limited (basic scans only) | No | Yes ($5 credit for new accounts) |
| Self-hosted Option | No | No | No | No (API only) |
| Model Variety | Limited (proprietary models) | N/A (diagnostic tool) | Multiple LLM providers | GPT-4o, GPT-4o mini, GPT-4 Turbo, fine-tuning |
| Mobile App | No | No | No | No |
| Export Formats | JSON, CSV | PDF Reports, JSON, CSV | JSON | JSON |
| SSO / Enterprise Auth | Limited (email only) | Yes (enterprise tier) | Limited (contact sales) | Yes (enterprise tier) |
| Open Source | No | No | No | No |
| Latency Guarantees | No | N/A | No (variable by design) | No (best-effort) |
| Rate Limits | Strict (500 req/min) | Generous (diagnostic use) | Flexible (spot market) | Tiered (60-10K req/min) |
| Support Response Time | 48+ hours | 24-48 hours | Email only | 4-24 hours (tiered) |
| Uptime SLA | No | 99.5% | No | 99.9% (enterprise) |

Final Verdict: Who Should Choose What?

- Choose Agentic API Grader if you are building products where autonomous AI agents interact with your APIs and you need concrete data on how well your endpoints will perform under agentic workloads.

- Choose The Grid if your primary constraint is inference cost and your application architecture can tolerate variable latency, particularly for batch processing or non-real-time workloads.

- Choose OpenAI API if you need production-grade reliability, access to state-of-the-art models, and enterprise support infrastructure for mission-critical AI features.

Still on Lety Ai? Staying may make sense only if you have a deeply integrated custom setup with no viable migration path and your current costs remain within budget despite the reliability risks.

Frequently Asked Questions

How difficult is it to migrate away from Lety Ai?

Migration difficulty depends on your integration depth. Most teams with standard REST-based implementations can complete the switch within one to two weeks by updating API endpoints and adjusting request/response handling. The primary effort comes from testing output consistency, as different models may produce varied results for the same inputs. Plan for a parallel testing phase before full cutover.
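A parallel testing phase can be as simple as sending the same prompts to both providers and flagging answers that diverge. The sketch below assumes each provider is wrapped in a plain function taking a prompt and returning text. The stub functions and the 0.8 threshold are hypothetical, and character-level similarity from `difflib` is only a crude proxy for output quality, so flagged items still need human review.

```python
from difflib import SequenceMatcher
from typing import Callable, Iterable, List, Tuple


def compare_providers(
    prompts: Iterable[str],
    old_call: Callable[[str], str],
    new_call: Callable[[str], str],
    min_similarity: float = 0.8,
) -> List[Tuple[str, float]]:
    """Return (prompt, similarity) pairs where the two outputs diverge."""
    flagged = []
    for prompt in prompts:
        old_out, new_out = old_call(prompt), new_call(prompt)
        ratio = SequenceMatcher(None, old_out, new_out).ratio()
        if ratio < min_similarity:
            flagged.append((prompt, ratio))
    return flagged


# Stub providers for illustration only.
def old_provider(prompt: str) -> str:
    return prompt.upper()


def new_provider(prompt: str) -> str:
    return prompt.upper() if "refund" in prompt else "unrelated answer"


flagged = compare_providers(["refund status", "reset password"], old_provider, new_provider)
for prompt, ratio in flagged:
    print(f"diverged on {prompt!r} (similarity {ratio:.2f})")
```

Running this against a sample of real production prompts before cutover gives a concrete list of cases to inspect instead of a general impression.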

How do the total costs compare when switching from Lety Ai?

Lety Ai charges premium rates without transparent breakdowns. The Grid offers the lowest costs of the three via spot market pricing, but with variable availability. OpenAI API provides predictable usage-based pricing starting at $0.002 per 1K tokens for GPT-4o mini, which is often cheaper than Lety Ai's effective rates once you factor in reliability and support costs. Agentic API Grader is a separate diagnostic cost and does not replace inference spending.

Which alternative is best for small development teams with limited budgets?

The OpenAI API free tier with its $5 credit is the most practical starting point for small teams, offering immediate access to capable models without upfront commitment. If you have high-volume batch workloads, The Grid can reduce costs by up to 90 percent but requires tolerance for latency variability. Avoid Agentic API Grader unless your core product specifically depends on AI agent interactions.

What happens to my existing projects if I switch providers and the new tool is discontinued?

Vendor lock-in risk exists with any third-party service. You mitigate this by building abstraction layers in your code that isolate provider-specific logic, using standardized request/response formats where possible, and maintaining documentation of your integration patterns. OpenAI has the strongest track record of stability in this space, while newer entrants like The Grid carry inherently higher discontinuation risk due to their spot market business model.
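An abstraction layer of the kind described here can be sketched as a small interface that application code depends on, with one adapter per vendor behind it. Everything named below (`InferenceProvider`, `Completion`, `EchoProvider`, `summarize`) is hypothetical and for illustration only. A real adapter would call the vendor's SDK inside `complete`.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Completion:
    """Provider-neutral response shape used by application code."""
    text: str
    provider: str


class InferenceProvider(Protocol):
    """Interface every vendor adapter must satisfy."""
    name: str

    def complete(self, prompt: str) -> Completion: ...


class EchoProvider:
    """Stand-in adapter; a real one would wrap a vendor SDK call here."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> Completion:
        return Completion(text=f"[{self.name}] {prompt}", provider=self.name)


def summarize(ticket: str, provider: InferenceProvider) -> str:
    """Application logic that depends only on the InferenceProvider interface."""
    return provider.complete(f"Summarize: {ticket}").text


print(summarize("Order 1234 arrived damaged", EchoProvider("provider-a")))
```

If a vendor is discontinued, only its adapter class needs replacing; `summarize` and the rest of the application stay untouched.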