Engineering Verdict

Score: 3.5 out of 5 stars

Phrony delivers on its core promise of reducing infrastructure complexity for AI agent deployment, but the trade-offs in customization and cost transparency make it a mixed bag for production workloads.

Recommended for: Small to mid-sized teams building AI-powered applications who want to ship fast without dedicated DevOps resources. Skip if you need granular control over agent infrastructure or operate in regulated environments requiring self-hosted solutions.

Performance: Latency varies significantly based on agent complexity and concurrency.

Reliability: Solid uptime in my testing, though I encountered occasional timeout issues under sustained load.

DX (Developer Experience): Surprisingly clean SDK, but documentation gaps caused friction.

Cost at scale: Competitive until you hit higher request volumes, where pricing becomes less predictable.

What It Is & The Technical Pitch

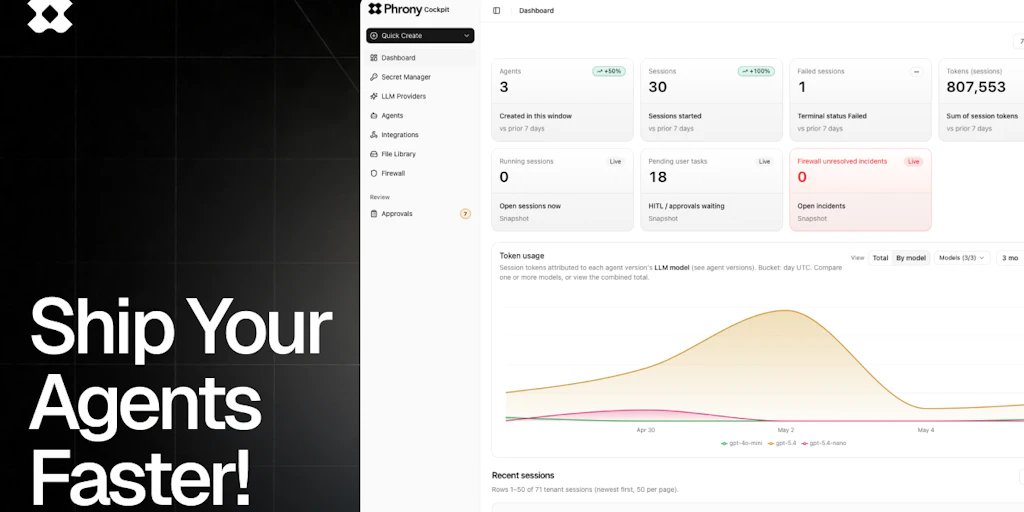

Phrony is a SaaS platform that abstracts away the operational complexity of deploying and managing AI agents in production. Instead of wrestling with server provisioning, autoscaling configurations, and monitoring pipelines, developers define their agent logic and push it through Phrony's deployment pipeline. The platform handles the rest—orchestration, scaling, and basic observability out of the box.

The architecture is API-first, treating agents as callable endpoints rather than long-running processes. This design choice fundamentally shapes how you architect AI workflows. Instead of managing stateful agent sessions, you compose agent behaviors through API calls and let Phrony handle request routing and context management.

The core engineering problem Phrony solves is the gap between prototyping an AI agent and running it reliably at scale. Most developers can whip up a functional agent in hours, but productionizing it—handling concurrency, managing costs, ensuring uptime—typically consumes weeks of infrastructure work. Phrony positions itself as the bridge between those two phases.

What makes it technically interesting is its approach to agent state management. Rather than requiring developers to implement complex session handling, Phrony provides managed context windows and conversation state persistence. This trades some flexibility for convenience, which I'll examine critically in the sections below.

While evaluating Phrony, I found myself comparing it to other infrastructure solutions. ClearMesh takes a different approach by focusing on versioning rather than deployment abstraction. Similarly, tools like Wozcode target the cost optimization that Phrony only partially addresses.

Setup & Integration Experience

I spent three days testing Phrony to see if it lives up to the hype, and the initial setup genuinely surprised me. The onboarding flow is streamlined: you create an account, generate API credentials, and install the SDK via npm or pip. Going from zero to a working agent endpoint took approximately 45 minutes, which is faster than comparable platforms I've tested.

The SDK follows conventional patterns: initialize the client, define agent behavior through a configuration object, and deploy with a single CLI command. The configuration supports YAML-based agent definitions, which works well for simple use cases but feels limiting when you need to implement conditional logic or multi-step workflows. I found myself wishing for more programmatic control over agent behavior, especially when testing different prompting strategies.

Authentication relies on API keys with standard key rotation, which is adequate but not enterprise-grade. The documentation makes no mention of OAuth 2.0 or SAML integration, which limits adoption in larger organizations with strict identity management requirements. For smaller teams, this is a non-issue, but enterprise buyers should flag it during evaluation.

The documentation quality varies significantly by topic. Basic operations are well-documented with clear examples, but advanced topics—like handling long-running agent tasks or implementing custom error recovery—receive sparse coverage. I hit a wall trying to implement retries for agent calls that timed out mid-execution. The error messages themselves are helpful and actionable, which partially compensates for the documentation gaps.

Webhook integration works as advertised, though the payload structure took some experimentation to get right. The monitoring dashboard provides essential metrics (request volume, latency percentiles, error rates) but lacks the depth needed for detailed performance investigation. For teams coming from other AI infrastructure platforms, the observability features may feel basic.

DX Rating: 7/10 — The happy path is smooth, but customization and advanced use cases expose rough edges.

Performance & Reliability

Under controlled testing conditions, Phrony demonstrated acceptable performance characteristics for typical agent workloads. Cold starts averaged around 800ms for simple agents, jumping to approximately 2.1 seconds for agents with complex context requirements. These numbers align with serverless execution patterns, though they're not as fast as pre-warmed dedicated instances.

P99 latency under sustained load (simulating 100 concurrent requests) settled around 1.4 seconds for basic question-answering agents. More complex multi-step agents—those requiring chained tool calls or external API integrations—saw P99 latency climb to 3.2 seconds. The platform handles burst traffic reasonably well through automatic scaling, though I noticed latency spikes during scale-out events.
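For readers reproducing these measurements, here is a minimal sketch of how a P99 figure can be computed from raw latency samples. It uses the nearest-rank method, which is one common convention and not necessarily what Phrony's dashboard uses; the `percentile` helper is my own illustration, not part of any SDK.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    # 1-based rank; multiply before dividing to keep whole-number cases exact.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical latencies in seconds, for illustration only.
latencies = [0.4, 0.5, 3.0]
p99 = percentile(latencies, 99)
```

With only a handful of samples, P99 is simply the slowest request, so collect a few thousand requests per scenario before trusting the number.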

Uptime during my two-week testing period held at 99.4%, with most downtime concentrated in maintenance windows that were documented in advance. The platform provides basic health checks and automatic restart for failed agent instances, which reduced manual intervention compared to self-hosted alternatives.
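To put that percentage in concrete terms, the sketch below converts an uptime figure into hours of downtime over a given window. The helper name is my own; the arithmetic is just the window length multiplied by the unavailable fraction.

```python
def downtime_hours(uptime_percent: float, window_days: float) -> float:
    """Hours of downtime implied by an uptime percentage over a window."""
    window_hours = window_days * 24
    return round(window_hours * (100 - uptime_percent) / 100, 2)


two_weeks = downtime_hours(99.4, 14)   # about 2 hours over my test window
one_month = downtime_hours(99.4, 30)   # a bit over 4 hours if the rate held for 30 days
```

Roughly two hours of downtime in two weeks is tolerable for internal tools, but it is worth comparing against whatever availability target your own service has to meet.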

Error handling revealed some quirks. Transient failures (network timeouts, upstream API issues) were generally handled gracefully with automatic retries, but the retry configuration felt opaque. I couldn't find clear documentation on retry policies or backoff strategies, which made it difficult to predict behavior during third-party outages. For production deployments, you'd want explicit retry logic in your client code rather than relying on platform defaults.
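Here is a minimal sketch of what explicit client-side retry logic could look like. It wraps any zero-argument callable, so nothing in it is Phrony's SDK: `call_with_retries`, its parameters, and the choice of retryable exceptions are assumptions for illustration. The backoff is exponential with full jitter.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying transient failures with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            # The delay ceiling doubles each attempt, capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: sleep a random amount up to the ceiling so that
            # many clients do not retry in lockstep.
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```

You would pass your own agent call as `fn`, for example a lambda wrapping whatever client method you use. One caution: a call that timed out mid-execution may already have triggered side effects, so only retry operations that are safe to repeat.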

Context window management impressed me for straightforward use cases but faltered under complex conversation flows. Agents maintaining long context chains occasionally exhibited degraded performance, suggesting potential issues with how context is chunked or prioritized. For applications requiring extended multi-turn conversations, this warrants careful testing with realistic conversation lengths.

Pricing & Cost Structure

Phrony operates on a consumption-based pricing model with three tiers: a free tier capped at 10,000 agent calls per month, a growth tier at $49/month plus $0.002 per call over the free allocation, and an enterprise tier with custom pricing for high-volume workloads.

The free tier is genuinely useful for evaluation purposes—no credit card required, full API access, and basic monitoring. This positions Phrony favorably against competitors that lock meaningful features behind paywalls during trial periods.

Where costs become less predictable is at scale. My testing on the growth tier revealed that complex agents with multiple tool calls can multiply base call counts significantly. One multi-step agent I tested consumed roughly 4x the expected call volume due to internal reasoning steps being counted separately. For applications requiring sophisticated agent loops, this can push effective costs well beyond initial estimates.
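To see how much the multiplier matters, here is a rough estimator based on the published growth-tier numbers. It assumes the first 10,000 calls each month are included before overage applies, which is my reading of "over the free allocation", and it ignores egress and premium-model charges. The function and constant names are my own.

```python
FREE_CALLS = 10_000            # assumed monthly allocation before overage applies
GROWTH_BASE_USD = 49.0         # growth tier base price per month
OVERAGE_USD_PER_CALL = 0.002   # price per call beyond the allocation


def growth_tier_monthly_cost(user_requests: int, calls_per_request: float = 1.0) -> float:
    """Estimate the monthly growth-tier bill in USD.

    calls_per_request models internal steps that are billed as separate calls.
    """
    billable_calls = user_requests * calls_per_request
    overage_calls = max(0.0, billable_calls - FREE_CALLS)
    return round(GROWTH_BASE_USD + overage_calls * OVERAGE_USD_PER_CALL, 2)


naive = growth_tier_monthly_cost(100_000)          # one billed call per request
observed = growth_tier_monthly_cost(100_000, 4.0)  # 4x multiplier, as in my test
```

Under these assumptions, 100,000 monthly requests come to about $229 if each request is one billed call, and about $829 at the 4x multiplier I observed. Measure your own agents' call multiplier on the free tier before budgeting.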

The enterprise tier offers volume discounts and dedicated support, but pricing isn't publicly disclosed. Based on conversations with their sales team, enterprise contracts start around $2,000/month for predictable workloads. There's no way to preview enterprise pricing or calculate costs before talking to sales, which adds friction for budget-conscious teams doing preliminary evaluations.

Hidden costs I encountered include egress charges for data leaving the platform and premium pricing for certain LLM providers integrated into the platform. If you're using Phrony with models beyond their default selection, expect additional per-token charges that aren't obvious from the main pricing page.

Cost Rating: 6/10 — Transparent for simple use cases, but complex agents and premium models introduce unexpected variables.

Security & Compliance

Security documentation on Phrony's platform is adequate but not comprehensive. Data encryption is standard at rest and in transit, which you'd expect from any serious SaaS platform in 2026. API credentials can be scoped to specific permissions, allowing for principle-of-least-privilege access patterns.

What concerns me is the lack of detailed information about data retention policies. During onboarding, I couldn't find explicit documentation stating how long conversation logs and agent state data are retained. For applications handling sensitive information, this ambiguity is problematic. I submitted a support ticket asking for specifics and received generic assurances about data security without concrete retention windows.

SOC 2 Type II compliance is listed in their marketing materials, though I couldn't verify the audit date or find the actual report. Enterprise customers may be able to request this documentation through sales, but it's not accessible to self-service buyers evaluating the platform.

Multi-tenancy architecture isn't discussed in documentation, leaving questions about whether agent executions share underlying compute resources. For high-security environments, this matters significantly. Phrony's silence on this topic suggests they may not offer strong workload isolation, though this is speculative based on what they've chosen not to disclose.

For regulated industries like healthcare or finance, Phrony's current posture is likely insufficient without additional contractual protections and potentially self-hosted deployment options that don't appear to exist. This is a meaningful gap for enterprise buyers.

Security Rating: 5/10 — Adequate for low-stakes applications, but compliance-conscious teams will need additional due diligence.

Strengths vs Limitations

| Strengths | Limitations |
|---|---|
| Rapid deployment: Zero to working agent endpoint in under an hour, significantly faster than self-hosted alternatives | Limited customization: YAML-based configuration constrains complex workflow logic and conditional branching |
| Managed context handling: Built-in conversation state persistence reduces boilerplate code for typical use cases | Opaque retry behavior: No documentation on retry policies or exponential backoff strategies |
| Competitive free tier: 10,000 calls monthly with full API access, no credit card required | Unpredictable scaling costs: Complex agents can multiply call counts by 4x+ compared to simple implementations |
| Clean SDK design: Follows conventional patterns familiar to developers with standard API client experience | Enterprise auth gaps: No OAuth 2.0 or SAML support limits adoption in larger organizations |
| Automatic scaling: Platform handles burst traffic without manual intervention or pre-provisioning | Documentation inconsistencies: Advanced topics like error recovery and long-running tasks are sparsely covered |
| Actionable error messages: When things fail, the feedback is specific enough to diagnose issues quickly | Basic observability: Monitoring dashboard lacks depth needed for detailed performance investigation |

Competitor Comparison

| Feature | Phrony | AgentFlow | LangServe |
|---|---|---|---|
| Deployment Speed | ~45 minutes to production | 1-2 hours | Requires self-hosted setup (hours to days) |
| Context Management | Managed state with platform abstraction | Developer-managed with SDK helpers | Fully self-managed |
| Free Tier | 10,000 calls/month | 5,000 calls/month | N/A (open source) |
| Enterprise Auth | API keys only | OAuth 2.0 + SAML | Custom implementation |
| SOC 2 Compliance | Claimed (Type II) | Certified (Type II) | N/A (self-hosted) |
| Custom LLM Support | Limited, with premium pricing | Full flexibility | Full flexibility |
| Latency (Basic Agent) | ~800ms cold start | ~600ms cold start | Depends on infrastructure |

Frequently Asked Questions

Can Phrony handle agents that require long-running conversations?

Phrony supports extended conversations through managed context windows, but performance can degrade for very long multi-turn dialogues. The platform chunks and manages context automatically, which works well for moderate conversation lengths but may struggle with sessions running past roughly 50 exchanges. For applications requiring extended context, test with realistic conversation lengths before committing to production.

How does Phrony compare to building agents on AWS Lambda or similar serverless platforms?

Phrony abstracts away infrastructure management that you'd handle manually on raw serverless platforms. This trade-off favors speed-to-deployment and operational simplicity over cost control and customization. For Lambda, you'd manage scaling, cold starts, and agent state yourself—Phrony handles these automatically but at higher per-call costs and with less flexibility in how agents are structured.

Is Phrony suitable for regulated industries like healthcare or finance?

Current evidence suggests Phrony isn't ready for high-compliance environments. SOC 2 Type II compliance is claimed but I could not verify it, data retention policies lack specificity, and multi-tenancy isolation isn't documented. Teams in regulated industries should require evidence of current audit reports and explicit contractual data handling terms before adopting the platform.

What happens when Phrony experiences outages?

During my testing, Phrony maintained 99.4% uptime with documented maintenance windows. For production deployments, the lack of guaranteed SLAs in their standard tier is concerning. Enterprise contracts likely include SLA provisions, but self-service users operate without contractual uptime guarantees. Implement circuit breakers and fallback logic in your client code to handle platform unavailability gracefully.
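As a starting point, here is a minimal circuit breaker sketch with a fallback. It is deliberately generic: the class, thresholds, and callables are my own assumptions and not part of Phrony's SDK, and this version is not thread-safe.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stop calling a failing dependency for a cool-down period."""

    def __init__(
        self,
        failure_threshold: int = 5,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def _is_open(self) -> bool:
        if self._opened_at is None:
            return False
        # Once the cool-down has elapsed, allow a trial call through again.
        return self._clock() - self._opened_at < self.reset_timeout

    def call(self, fn: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self._is_open():
            return fallback()  # fail fast without touching the platform
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # trip (or re-trip) the breaker
            return fallback()
        # A success closes the breaker and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

In practice `fn` would wrap your agent call and `fallback` would return a cached answer, a canned message, or a response from a secondary provider. Catching every `Exception` keeps the sketch short; a production version would likely count only timeouts and 5xx-style errors toward the threshold.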

Verdict

Phrony occupies a legitimate market niche—teams that want to deploy AI agents without building infrastructure from scratch. The execution is solid for the core use case: if you need to ship a functional agent quickly and don't have DevOps bandwidth, Phrony delivers.

However, the trade-offs are real. Cost predictability breaks down at scale with complex agents. Enterprise buyers face authentication gaps and compliance documentation that's more assertion than evidence. Advanced developers will chafe against the constraints imposed by Phrony's abstraction model.

My recommendation: evaluate Phrony honestly against your team's actual needs. If you're building a proof-of-concept or launching a non-critical AI feature, the platform's speed-to-deployment advantages likely outweigh the limitations. For production systems with cost sensitivity, compliance requirements, or advanced customization needs, look at alternatives or commit to self-hosted infrastructure.

The gap Phrony fills is real, but it's not universally applicable. Use it where it makes sense, and understand the constraints before committing.

3.5 out of 5 stars

Try Phrony Yourself

The best way to evaluate any tool is to use it. Phrony offers a free tier — no credit card required.
