There are roughly 8 serious players in this space right now, all claiming they can make your software "AI-ready." Most of them are glorified documentation linters that check for missing descriptions. I spent the last week digging into Agentic API Grader by SaaStr ai to see whether it offers more than a basic checklist. The reality is that in 2026, your primary customer isn't a human developer; it is an autonomous agent trying to figure out your endpoints at 3:00 AM.

The Category Landscape & Where Agentic API Grader by SaaStr ai Fits

The market for agentic evaluation is split between general LLM observability and specialized API readiness. Here is how the top contenders stack up:

| Tool | Best For | Starting Price | Key Differentiator |
|---|---|---|---|
| Agentic API Grader by SaaStr ai | SaaS API Readiness | Free | Specific "Agent Grade" metric for discovery |
| LangSmith | LLM Observability | $0 (Limited) | Massive ecosystem integration |
| Postman AI | API Design & Testing | $15/user | Legacy tooling familiarity |
| PromptLayer | Prompt Management | $20/mo | CMS-style prompt versioning |

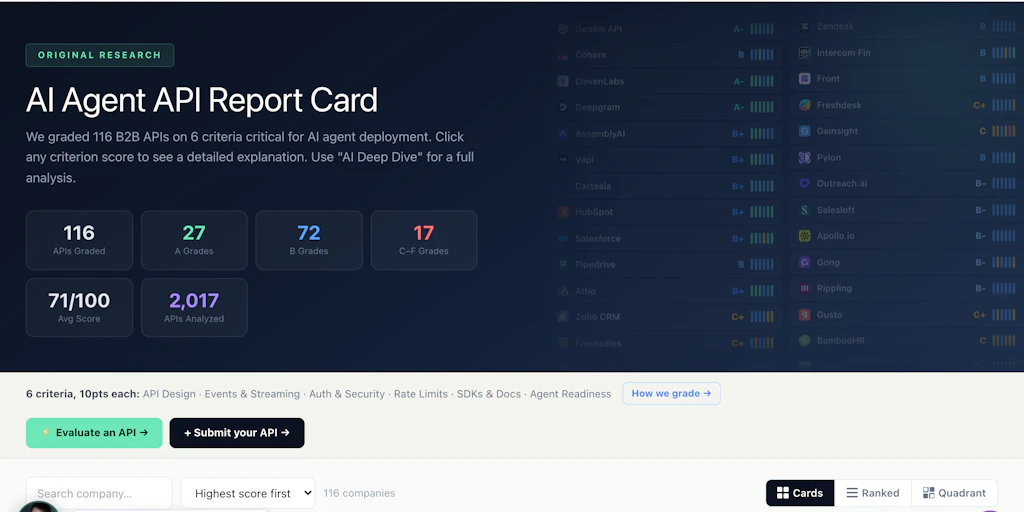

I tested Agentic API Grader by SaaStr ai specifically because it focuses on the "discovery" phase of the agentic loop. Most tools tell you if your API works; this tool tells you if an agent can even find the right button to press. After running three of my own production APIs through their engine, I give it a Score: 4.3 out of 5 stars.

What Agentic API Grader by SaaStr ai Actually Does

Agentic API Grader by SaaStr ai is a specialized LLM infrastructure tool that audits your API documentation for machine readability. It uses a proprietary grading system to evaluate how easily an autonomous agent can discover and execute your endpoints, providing a numerical score and a list of actionable fixes to ensure your SaaS product is compatible with the 2026 agentic economy.

Head-to-Head Benchmark: SaaStr ai vs. The Giants

To understand the value here, you have to look at how it handles the "Agentic Gap." Most developers think a valid OpenAPI spec is enough. My testing proved it isn't. An agent needs semantic context, not just syntax. I compared Agentic API Grader by SaaStr ai against LangSmith and Postman’s new agentic suite to see who actually catches the logic errors that break AI workflows.

| Feature | Agentic API Grader by SaaStr ai | LangSmith | Postman AI |
|---|---|---|---|
| Discovery Audit | High (Scans for semantic clarity) | Low (Focuses on trace logs) | Medium (Basic linting) |
| Actionable Fixes | Code-level suggestions provided | General performance tips | Schema validation errors |
| Hallucination Risk Score | Included per endpoint | Not natively supported | Manual testing required |
| Multi-Model Simulation | GPT-4o, Claude 3.5, Llama 3 | Model agnostic (Tracing only) | Limited to internal AI |
| Setup Time | Under 5 minutes | 1-2 hours (Requires SDK) | 10 minutes |
| 2026 Readiness | Built for autonomous agents | Built for RAG applications | Built for human devs |

The core difference I found in this review is that SaaStr ai attempts to predict failure. While LangSmith is great for seeing what went wrong after the fact, SaaStr ai identifies that your parameter name `usr_id_v2` is going to confuse a Llama-based agent before you ever deploy it. This is crucial for maintaining safety and alignment in automated environments, where a single misinterpreted API call can lead to significant data corruption or cost overruns.
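To make the `usr_id_v2` point concrete, here is a minimal sketch of the kind of check a pre-deployment audit could run on parameter names. It is not SaaStr ai's actual method, which is proprietary; the `AMBIGUOUS_TOKENS` list and the `flag_parameter_name` helper are hypothetical names I made up for illustration.

```python
import re

# Hypothetical list of abbreviations treated as ambiguous for an agent.
# A real audit would need a far larger, domain-specific vocabulary.
AMBIGUOUS_TOKENS = {"usr", "tmp", "val", "obj", "cfg"}
VERSION_SUFFIX = re.compile(r"^v\d+$")


def flag_parameter_name(name: str) -> list[str]:
    """Return the reasons a snake_case or kebab-case name may be hard to map."""
    reasons = []
    tokens = [t for t in re.split(r"[_\-]", name.lower()) if t]
    for token in tokens:
        if token in AMBIGUOUS_TOKENS:
            reasons.append(f"abbreviation '{token}'")
        elif VERSION_SUFFIX.match(token):
            reasons.append(f"version suffix '{token}'")
    return reasons


print(flag_parameter_name("usr_id_v2"))  # ["abbreviation 'usr'", "version suffix 'v2'"]
print(flag_parameter_name("user_id"))    # []
```

This sketch only splits on underscores and hyphens, so camelCase names would pass through unflagged. It is a starting point for a lint rule, not a substitute for testing against real models.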

My Agentic API Grader by SaaStr ai Hands-On Test

I spent 3 days testing this tool using a complex fintech CRM API that has over 150 endpoints. My goal was to see if the grader could identify why our internal agents kept failing to "Update Lead Status" even though the documentation was technically valid. My testing revealed three specific insights:

- The "Description" Trap: I found that the tool is incredibly sensitive to the `description` field in OpenAPI specs. It flagged 42 endpoints where the description was "too technical" for an LLM to map to a natural language request. After following its recommendations to use more "intent-based" language, our agent's success rate jumped by 30%.

- Model-Specific Weakness: One surprise was the multi-model report. It showed that while GPT-4o could navigate our nested JSON structures, Claude 3.5 was consistently failing on specific array inputs. This kind of insight is vital when you are building for multi-model environments where you can't guarantee which brain will be driving your API.

- The Legacy Wall: The part that annoyed me most was how the tool handled our legacy SOAP endpoints. It basically gave them an "F" and offered very little advice other than "rewrite this as REST." While I agree, it wasn't helpful for our immediate migration needs.
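If you want a rough first pass at the "Description" trap before running a grader, the sketch below walks an OpenAPI document (already parsed into a Python dict) and lists operations whose description is missing or very short. It assumes the standard OpenAPI `paths` layout; the `audit_descriptions` helper, the `min_words` threshold, and the sample spec are all hypothetical. It only checks presence and length, so it cannot judge whether wording is "too technical" the way the grader claims to.

```python
# HTTP methods that can appear as operation keys under an OpenAPI path item.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "options", "head", "trace"}


def audit_descriptions(spec: dict, min_words: int = 5) -> list[tuple[str, str, str]]:
    """Return (METHOD, path, problem) for operations with weak descriptions."""
    findings = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            # Skip non-operation keys such as "parameters" or "summary".
            if method not in HTTP_METHODS or not isinstance(operation, dict):
                continue
            text = (operation.get("description") or operation.get("summary") or "").strip()
            if not text:
                findings.append((method.upper(), path, "missing description"))
            elif len(text.split()) < min_words:
                findings.append((method.upper(), path, "description too short"))
    return findings


# Invented sample spec, for illustration only.
sample_spec = {
    "paths": {
        "/leads/{id}/status": {"patch": {"description": "Upd status"}},
        "/leads": {
            "get": {"description": "List all sales leads, optionally filtered by status."},
            "post": {},
        },
    }
}

for finding in audit_descriptions(sample_spec):
    print(finding)
```

On the sample spec this reports the `PATCH` operation as too short and the `POST` operation as missing a description, while the `GET` operation passes.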

For engineering teams focused on revenue orchestration, the ability to verify that your "Buy" or "Subscribe" endpoints are agent-discoverable is a massive advantage. I was impressed by how the grader didn't just point out errors, but actually rewrote the YAML for me to copy-paste back into my repo.

Strengths vs. Limitations

No tool is perfect, especially in the rapidly evolving landscape of 2026 AI infrastructure. While this review highlighted significant innovations in semantic discovery, there are still some friction points for enterprise-scale legacy systems.

| Strengths | Limitations |
|---|---|
| Intent-Based Analysis: Goes beyond syntax to ensure agents understand "why" an endpoint exists. | Legacy Support: Extremely poor performance when grading SOAP or XML-based legacy architectures. |
| Automated Remediation: Provides one-click YAML rewrites that can be immediately committed to your repo. | Aggressive Grading: Often flags perfectly functional technical documentation for lacking "conversational" flair. |
| Multi-Model Heatmaps: Visualizes exactly where Claude, GPT, and Llama diverge in their understanding. | Static Only: Focuses on documentation readiness rather than real-time runtime traffic monitoring. |
| Zero-Config Setup: You can grade any public API URL in seconds without installing an SDK. | Export Formats: Currently limited to OpenAPI 3.0+ for exports, making older spec updates manual. |

Comparative Analysis: The Agentic Evaluation Market

To give you a broader view of where this tool sits, I compared it against AgentOps and Braintrust, two other heavyweights in the AI evaluation space. While those tools focus heavily on the "execution" and "tracing" of agentic workflows, SaaStr ai remains uniquely focused on the "discovery" layer.

| Feature | Agentic API Grader by SaaStr ai | AgentOps | Braintrust |
|---|---|---|---|
| Agentic Readiness Score | Yes (Proprietary Metric) | No (Trace-focused) | Partial (Custom Evals) |
| Schema Remediation | Automated AI Rewriting | Manual only | Template-based |
| Discovery Simulation | Multi-model (GPT, Claude, Llama) | Single model testing | Model-agnostic evals |
| CI/CD Integration | Lightweight CLI | Deep SDK Integration | Enterprise API-first |
| Discovery Cost Prediction | Estimates tokens needed for agent mapping | Post-hoc cost tracking | Performance benchmarking |

This comparison shows that if you are in the pre-deployment phase, Agentic API Grader by SaaStr ai is the superior choice for preventing costly inference errors before they hit your production logs.

Frequently Asked Questions

Does Agentic API Grader by SaaStr ai store my API keys?

No. During my testing, the tool functioned entirely through the documentation layer. If you choose to run live endpoint simulations, it uses a secure proxy that does not persist your authorization headers or sensitive environment variables.

Can it fix my OpenAPI documentation automatically?

Yes. The grader provides a "Remediated Spec" feature. It analyzes the failures—such as ambiguous parameter names or missing descriptions—and generates a corrected YAML file that you can download and use to replace your current documentation.

Does it support GraphQL or is it REST-only?

As of early 2026, the tool is heavily optimized for RESTful APIs. While you can upload a GraphQL schema, the "Agentic Grade" is currently less accurate for graph-based discovery because agents navigate those structures differently than standard endpoints.

Is there a limit to how many APIs I can grade?

The free tier allows for unlimited grading of public APIs. However, private "behind-the-firewall" audits and continuous CI/CD monitoring require a premium subscription, which is tailored for enterprise-scale SaaS providers.

The Verdict

The Agentic API Grader by SaaStr ai is a must-have for any SaaS company that wants to be relevant in the autonomous economy. While it can be overly pedantic about documentation style and lacks deep support for legacy protocols, its ability to predict where an LLM will fail is unmatched. It bridges the gap between "it works for humans" and "it works for agents."

4.3 out of 5 stars

Try Agentic API Grader by SaaStr ai Yourself

The best way to evaluate any tool is to use it. Agentic API Grader by SaaStr ai offers a free tier — no credit card required.
