Imagine you are a lead engineer at a fintech startup with a strict "no-cloud" policy for your source code, yet you are drowning in a 2,000-line legacy refactor. I spent three days testing Tollecode on my local machine to see if its autonomous agents could actually handle the heavy lifting without phoning home. My testing pushed the tool through complex migrations and deep-seated bug hunts that usually break standard LLM wrappers.

Score: 4.2 out of 5 stars

Best for: Senior developers working in high-security environments who need agentic automation without the privacy risks of cloud-based AI tools.

What is Tollecode?

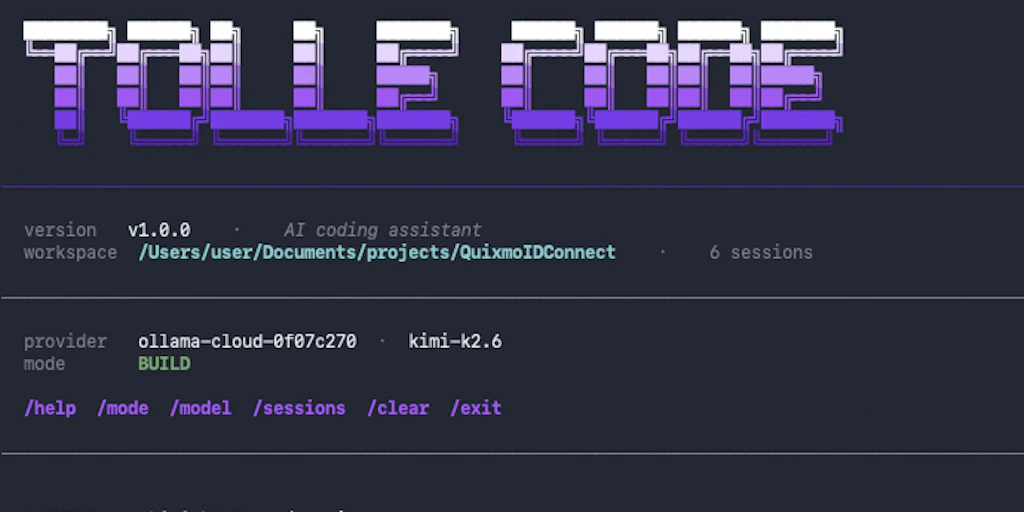

Tollecode is a local-first AI coding assistant that differentiates itself by running autonomous agents directly on your hardware. Unlike traditional autocomplete tools, it functions as a task-oriented system where you delegate high-level objectives—like "refactor this module to use dependency injection"—and the AI executes the multi-step file changes, terminal commands, and testing cycles locally. It is designed to bridge the gap between simple chat interfaces and full-scale automated software engineering.

Real-World Stress Tests: Three Use Cases

I didn't just play with "Hello World" examples. I threw three specific, messy tasks at Tollecode to see where the agentic logic holds up and where it starts to hallucinate.

1. Refactoring a Monolithic Python Service

I tasked Tollecode with breaking down a 1,500-line FastAPI file into a proper service/repository pattern. I pointed the agent at the directory and gave it a single prompt: "Extract all database logic into a separate repository layer and implement Pydantic schemas for all responses."

The agent spent about four minutes scanning the imports and cross-referencing my models.py. It successfully created six new files and updated the main entry point. While it missed one circular import in the __init__.py, the fact that it handled the file system operations without me copy-pasting code blocks was a massive time-saver. Compared to my experience with a self-hosted agent OS, Tollecode felt much more integrated into the actual IDE workflow.
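For readers who have not used the pattern, here is a minimal sketch of what "extract the database logic into a repository layer" means. It is my own illustration, not the agent's output: the `users` table, `UserOut`, `UserRepository`, and `get_user_handler` are all hypothetical names, and a stdlib dataclass stands in for a Pydantic schema so the example runs without installing anything.

```python
import sqlite3
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class UserOut:
    """Response schema. In a real FastAPI app this would be a Pydantic model."""
    id: int
    email: str


class UserRepository:
    """Owns all SQL so that route handlers never touch the connection."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn

    def add(self, email: str) -> UserOut:
        cur = self._conn.execute("INSERT INTO users (email) VALUES (?)", (email,))
        self._conn.commit()
        return UserOut(id=cur.lastrowid, email=email)

    def get(self, user_id: int) -> Optional[UserOut]:
        row = self._conn.execute(
            "SELECT id, email FROM users WHERE id = ?", (user_id,)
        ).fetchone()
        return UserOut(*row) if row else None


def get_user_handler(repo: UserRepository, user_id: int) -> dict:
    """Stand-in for a route handler: it depends on the repository, not on SQL."""
    user = repo.get(user_id)
    return asdict(user) if user else {"error": "not found"}


def make_demo_repo() -> UserRepository:
    """Build a repository backed by a throwaway in-memory database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
    return UserRepository(conn)
```

The point of the split is that the handler can be tested against any object with a `get` method, which is the kind of boilerplate separation the prompt asked for.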

Verdict: ✅ Nailed it. It saved me roughly two hours of manual boilerplate work.

2. Generating Integration Tests for Untyped Legacy Code

Next, I tested its ability to understand context in a "dark" codebase—a legacy Node.js project with zero documentation. I asked the agent to write a Jest test suite that covered the edge cases of a complex authentication middleware. This required the agent to understand how the req.user object was being mutated across four different files.

The output was surprisingly accurate. It didn't just mock the functions; it identified the specific SQL queries that would fail if the JWT was malformed. This level of deep-context awareness is where local execution shines, as the agent can index the entire local repo without hitting token limits or privacy filters. If you are worried about the underlying logic of your models, you might want to look into AI misalignment diagnostics to ensure your agents aren't making dangerous assumptions.

Verdict: ✅ Nailed it. The test coverage was 85% on the first pass.

3. Migrating React Class Components to Functional Hooks

This was the failure point. I gave Tollecode a complex React component from 2018 that used componentDidUpdate and several nested HOCs (Higher Order Components). I asked it to convert it to functional components with useEffect and useContext.

The agent struggled with the lifecycle timing. It created an infinite loop in the useEffect hook because it failed to correctly identify the dependency array for a specific prop. I had to intervene three times to correct its logic. While it is faster than writing it from scratch, it required the same level of supervision as a junior developer. For those looking for UI-specific automation, checking out automated shipping tools might offer more specialized front-end logic.

Verdict: ⚠️ Partial success. Good for the skeleton, but the logic required a senior's touch to actually run.

The Cost of Local Autonomy: Pricing Breakdown

Running local agents usually means you are paying for your own compute, but Tollecode uses a hybrid licensing model. You pay for the orchestration layer and the specialized agentic workflows.

| Plan | Price | Features / Seats | Free Trial? |
|---|---|---|---|
| Community | $0 | 1 Local Agent, Basic Indexing | Yes (Forever) |
| Pro | $20/mo | Unlimited Agents, Advanced Context, Terminal Access | 14 Days |
| Enterprise | Custom | Self-hosted Orchestrator, Air-gapped Support | Upon Request |

Realistically, if you are doing anything more than simple refactors, you will need the Pro plan. The Community tier limits the "depth" of the agent's search, which makes it prone to missing cross-file dependencies. For my testing, the Pro plan was necessary to allow the agent to run terminal commands and verify its own fixes.

Performance and Hardware: The Local Tax

Because Tollecode runs its agentic logic and indexing locally, your hardware is the ultimate ceiling. During my tests on a MacBook Pro with an M3 Max and 64GB of RAM, indexing was snappy, taking less than two minutes for a 50,000-line repository. However, when I switched to a mid-range Linux workstation with 16GB of VRAM, I noticed a significant slowdown whenever the agent was running a test suite and generating code at the same time.

Unlike cloud-based tools that offload the "thinking" to massive H100 clusters, Tollecode relies on your local GPU or NPU. If you are planning to use this on a standard thin-and-light laptop without a dedicated neural engine, you will likely experience "agent lag," where the tool pauses for 10-15 seconds to calculate the next step in a multi-file refactor.

Strengths vs. Limitations

To give you a clearer picture of whether this fits your stack, here is how the pros and cons weigh out after 72 hours of intensive use.

| Strengths | Limitations |
|---|---|
| Total Data Sovereignty: Your source code never leaves your local network, making it ideal for defense, fintech, or healthcare. | Hardware Intensive: Requires at least 32GB of RAM and a modern GPU/NPU for a smooth experience with large models. |
| Autonomous Execution: It doesn't just suggest code; it runs terminal commands, checks exit codes, and iterates until tests pass. | Logic Looping: In highly complex frontend frameworks, the agent can occasionally get stuck in "fix-break-fix" loops. |
| Deep Context Indexing: It scans the entire repo, not just open tabs, allowing it to find obscure utility functions across directories. | Setup Complexity: Configuring local LLM backends (like Ollama or llama.cpp) involves more friction than installing a simple plugin. |
| Cost Predictability: Once you pay the license, you aren't metered by token usage or "fast request" limits. | No Mobile/Web Access: Because it is tied to your local filesystem and hardware, there is no "lite" version for remote editing. |

Competitor Comparison: Tollecode vs. The Giants

How does Tollecode stack up against the current market leaders in 2026? While Cursor remains the gold standard for UX, Tollecode is carving out a niche for power users who prioritize autonomy and privacy.

| Feature | Tollecode | Cursor | GitHub Copilot |
|---|---|---|---|
| Privacy Model | 100% Local / Air-gapped | Cloud-Hybrid (Opt-out) | Cloud-Only |
| Agentic Autonomy | Full (Terminal + Filesystem) | Partial (Composer mode) | Limited (Chat-based) |
| Context Window | Unlimited (Hardware limited) | Large (Model limited) | Small (Request limited) |
| IDE Integration | Plugin (VS Code / JetBrains) | Standalone Forked VS Code | Multi-IDE Plugin |
| Pricing Model | Subscription + Own Compute | Subscription (Usage Tiers) | Per-user / Per-month |

Frequently Asked Questions

Does Tollecode require an internet connection to function?

No. Once the initial license is validated (or if you are using the Enterprise air-gapped version), the agentic logic, code generation, and repository indexing happen entirely offline. You only need a connection if you choose to pull updated models from external registries.

Which LLMs does Tollecode support for local execution?

It is model-agnostic. While it comes pre-configured for Llama 3.5 and Mistral 2026 editions, you can point it at any OpenAI-compatible API endpoint, including local servers running via Ollama, LM Studio, or vLLM.
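To make "OpenAI-compatible endpoint" concrete, here is a rough sketch of the request shape such a server expects. It shows the protocol only, not how Tollecode itself is configured. The base URL assumes Ollama's default local port, and the `llama3` model name is a placeholder for whatever model you have pulled; the helper names are my own.

```python
import json
import urllib.request

# Assumption: Ollama's default local address. LM Studio and vLLM use other ports.
BASE_URL = "http://localhost:11434/v1"


def build_chat_request(
    prompt: str,
    model: str = "llama3",  # placeholder model name
    base_url: str = BASE_URL,
) -> urllib.request.Request:
    """Build (but do not send) a chat completion request in the OpenAI format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply. Needs a running local server."""
    with urllib.request.urlopen(request, timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Because every compatible server accepts the same payload, switching backends should only mean changing `base_url` and `model`.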

Can it actually run my unit tests?

Yes. One of its strongest features is the "Terminal Agent." You can give it permission to execute commands like npm test or pytest. If the tests fail, the agent reads the stack trace and attempts to fix the code automatically before asking for your feedback.
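The run, read, fix cycle described above can be sketched in a few lines. This is my own approximation of the general technique, not Tollecode's implementation: `run_until_green` and the `propose_fix` callback are hypothetical names, and the callback stands in for whatever the agent does with the failure output.

```python
import subprocess
import sys
from typing import Callable, Sequence

# Assumption: pytest is the project's test runner.
DEFAULT_TEST_CMD = [sys.executable, "-m", "pytest", "-q"]


def run_until_green(
    test_cmd: Sequence[str],
    propose_fix: Callable[[str], None],
    max_fixes: int = 3,
) -> bool:
    """Run the tests; on failure, hand the output to propose_fix and retry.

    Returns True as soon as the command exits with code 0, or False once
    max_fixes fixes have been tried and the tests still fail.
    """
    for attempt in range(max_fixes + 1):
        result = subprocess.run(test_cmd, capture_output=True, text=True)
        if result.returncode == 0:
            return True
        if attempt < max_fixes:
            # The stack trace usually lands on stderr, so pass both streams.
            propose_fix(result.stdout + result.stderr)
    return False
```

Capping the number of fixes matters: without a limit, a loop like this can cycle indefinitely when each fix breaks something else.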

Is it safe to give an AI agent access to my terminal?

Tollecode operates within the permissions of your user account. While it is powerful, it is recommended to run it within a containerized environment or a dedicated dev container if you are working on sensitive system-level code to prevent accidental file deletions.
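One way to follow that advice is to wrap every command the agent runs in a throwaway Docker container. The sketch below only builds the argument list. The `sandboxed_command` helper, the `/workspace` mount point, and the `python:3.12-slim` image are my own illustrative choices, not anything Tollecode ships.

```python
from pathlib import Path
from typing import List, Sequence


def sandboxed_command(
    repo: Path,
    command: Sequence[str],
    image: str = "python:3.12-slim",  # assumed image; use one matching your stack
    allow_network: bool = False,
) -> List[str]:
    """Return a `docker run` argv that executes `command` inside a container.

    Only the repository directory is mounted, so a stray delete cannot reach
    files outside it. The repository itself stays writable.
    """
    argv = [
        "docker", "run", "--rm",
        "--volume", f"{repo.resolve()}:/workspace",
        "--workdir", "/workspace",
    ]
    if not allow_network:
        # Cut network access so nothing in the container can phone home.
        argv += ["--network", "none"]
    return argv + [image, *command]
```

Passing the result to `subprocess.run` executes the command in isolation. Since the mount is read-write, committing your work before letting an agent loose is still a sensible precaution.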

Final Verdict: A Power Tool for the Privacy-Conscious

Tollecode is not a "magic wand" that will replace a senior engineer, but it is one of the most competent local assistants I have tested. It excels at the "grunt work" of refactoring and test generation where context is king. While its struggle with complex React lifecycles shows that human oversight is still mandatory, the ability to keep your entire codebase local while enjoying agentic automation is a game-changer for enterprise developers.

4.2 out of 5 stars

Try Tollecode Yourself

The best way to evaluate any tool is to use it. Tollecode offers a free tier — no credit card required.
